This article originally appeared in the 2022 issue of Rice Engineering Magazine.

Research has been the engine driving Rice Engineering for more than a century. Inspired to solve the challenges facing humanity, Rice engineers pioneered nanotechnology by creating the world’s first “buckyballs” and helped create the first artificial heart. Today, Rice researchers are focused on addressing some of the most pressing problems we face — climate change, clean water, renewable energy, health care — and turning them into opportunities for innovation.

Rice Engineering has identified five key research areas: Engineering and Medicine, Cities of the Future, Energy and the Environment, Future Computing, and Molecular Nanotechnology and Materials.

In the following pages, we highlight some of the work being done in these focus areas, as well as examples of the philanthropic partnerships supporting it.

Faster, noninvasive technique promising for brain disorders

Brain disorders are among the most difficult medical conditions to treat or cure. On average, it takes almost nine years to develop a new drug for the central nervous system, and 92% of candidate drugs fail.

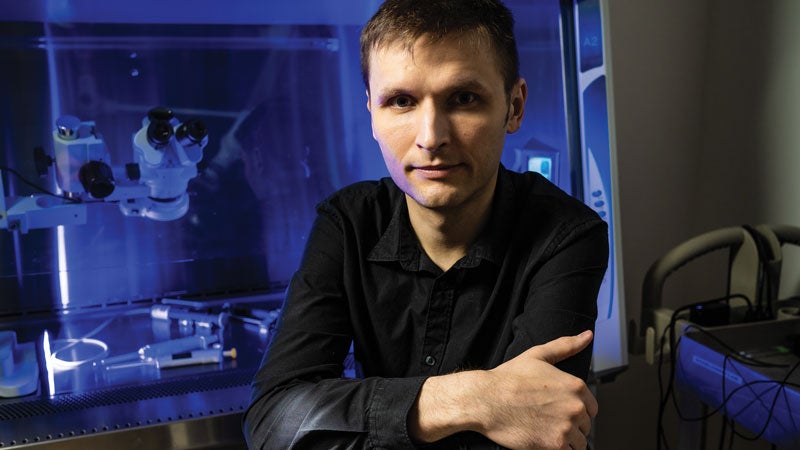

“How could we increase the success rate and speed up this process? This is the central question my laboratory is trying to solve,” said Jerzy Szablowski, assistant professor of bioengineering and a researcher in the Rice Neuroengineering Initiative.

Drug development is complicated, with multiple steps beginning with experiments in tissue culture followed by animal experiments. Most research projects use artificial animal models, which provide data more rapidly but fail to capture the entirety of the disease in humans.

“Drugs optimized for mice succeed in humans a fraction of the time,” Szablowski said. “Every time a drug fails, we have to start the process all over, which is both expensive and time-consuming.” His lab has devised two strategies to avoid the high rate of failure.

In the first, Szablowski and his collaborators reduce the number of experiments involving tissue culture and mouse models, and pursue safe, ethical methods to learn the molecular basis of disease in humans. “If we were able to do that,” he said, “we could design drugs that treat the disease, not animal models.”

The second strategy avoids “going back to the drawing board every time,” as he puts it, by developing a platform therapy that can treat multiple diseases with only slight adjustments.

“Our goal is to develop methods for interfacing with individual cells in the intact brain. You could open the skull and attach an electrode or optical fiber to every cell, obtain a lot of information and control any disease-causing neurons, but you’d damage the very brain you’re treating. That’s the conundrum,” Szablowski said.

One technique his group uses focuses ultrasound energy to make the blood-brain barrier temporarily and safely permeable. Focused ultrasound blood-brain barrier opening (FUS-BBBO) has been used in humans to deliver therapeutic molecules from the bloodstream into the brain. The procedure also lets proteins and other molecules pass in the opposite direction, from the brain into the bloodstream, where they can be readily sampled.

“We want to use FUS-BBBO to both obtain information about the molecules in the brain and to deliver genetic therapy that can control neuronal activity. The latter technology has already shown some success. Acoustically Targeted Chemogenetics (ATAC) is a technique that permits us to control specific brain regions with simple, safe drugs. The region is determined by a single noninvasive insonation procedure,” Szablowski said.

Think of ATAC as a platform. To treat epilepsy, for example, clinicians could deliver genetic therapy to the epileptic focus, the region where seizures originate.

“If we want to treat addiction, we could target the reward center. If we wanted to treat anorexia, we could target the feeding center of the brain. The spatial precision of ATAC may allow us to develop a therapy one time and only make small adjustments for different disorders. That approach could speed up therapy development and make the whole process less expensive,” he said.

Patients would undergo a single noninvasive outpatient procedure, and a few weeks later take a pill that controls only the targeted brain region.

“In our lab,” Szablowski said, “we develop other technologies, including finding markers for Parkinson’s disease, new ways to develop drugs faster through high-throughput screening, and more precise methods of targeting brain regions for gene therapy. All have one purpose – finding a way to make therapies for the brain cheaper and faster.”

Jerzy Szablowski received a five-year, $875,000 fellowship from the David and Lucile Packard Foundation, one of 20 awarded this year to early-career academics nationwide. He will use the funds to develop noninvasive biomarkers for diagnosing and monitoring the progression of Parkinson’s disease, PTSD and other brain disorders. Around the same time, he received a two-year, $447,510 grant from The Michael J. Fox Foundation for Parkinson’s Research, the world’s largest nonprofit funder of Parkinson’s disease research. Szablowski collaborates with researchers at Baylor College of Medicine.

Future cities and their infrastructure on the drawing board today

Of the five research focus areas identified by the George R. Brown School of Engineering, Cities of the Future perhaps sounds the most speculative, the most like science fiction.

“We have a huge opportunity to tackle pressing challenges at the intersection of urbanization, natural hazards, climate change and technology — to innovate and reimagine what our cities and their infrastructure might look like,” said Jamie Padgett, the Stanley C. Moore Professor in Engineering and chair of civil and environmental engineering (CEE).

The work is inherently interdisciplinary, and faculty from at least seven of the school’s engineering departments have already contributed to the plans.

“Given the amount of development in hazard-prone regions, such as along the coast, cities must be designed with resilience and climate change in mind,” Padgett said. “New approaches to adapt to these conditions while also considering the interdependence between social and technical systems are required.”

The Cities of the Future initiative explores ways to tackle chronic problems, such as rising sea levels, and episodic stressors, such as flooding. Understanding future climate conditions and their impact on cities is a prerequisite.

The work of Leonardo Dueñas-Osorio, professor of CEE, for instance, focuses on modeling the reliability of structures and infrastructure systems in the face of natural hazards and deterioration. He has studied power-grid failures, urban water security and the probability that homes will be damaged by high winds or flooding during a hurricane.

“Our goal is to calculate all the things that could go wrong in a large, complex system like a modern city. If there are 100 things, what would happen if two or three or 50 fail, but you do not know which ones? If we cannot predict such things with precision, we at least want to be confident about the bounds,” Dueñas-Osorio said.
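To make the idea of bounds concrete, here is a minimal Python sketch built on a hypothetical toy “city”: 100 components arranged as 10 feeder lines of 10 components each, where service survives as long as one feeder is fully intact. The layout and the names `system_works` and `failure_probability` are illustrative assumptions, and the method is plain Monte Carlo sampling with a Hoeffding confidence bound, not the techniques Dueñas-Osorio’s group uses. It estimates the chance of an outage when exactly k components fail at random, without knowing which ones.

```python
import math
import random

# Hypothetical toy "city": 10 feeder lines in parallel, each a chain of
# 10 components in series (100 components total). Service survives if at
# least one feeder has all of its components working.
N_FEEDERS = 10
CHAIN_LEN = 10
N_COMPONENTS = N_FEEDERS * CHAIN_LEN


def system_works(failed: set[int]) -> bool:
    """Return True if at least one feeder chain has no failed component."""
    for f in range(N_FEEDERS):
        chain = range(f * CHAIN_LEN, (f + 1) * CHAIN_LEN)
        if not any(c in failed for c in chain):
            return True
    return False


def failure_probability(k: int, trials: int = 20_000, delta: float = 0.05, seed: int = 0):
    """Estimate P(outage | exactly k components fail, chosen uniformly at random).

    Returns (estimate, lower, upper). By Hoeffding's inequality, the true
    probability lies in [lower, upper] with probability at least 1 - delta.
    """
    rng = random.Random(seed)
    outages = 0
    for _ in range(trials):
        failed = set(rng.sample(range(N_COMPONENTS), k))
        if not system_works(failed):
            outages += 1
    p_hat = outages / trials
    eps = math.sqrt(math.log(2 / delta) / (2 * trials))
    return p_hat, max(0.0, p_hat - eps), min(1.0, p_hat + eps)


for k in (2, 3, 10, 50):
    est, lo, hi = failure_probability(k)
    print(f"{k:>2} failures: P(outage) ~ {est:.3f}  (95% bound: {lo:.3f} to {hi:.3f})")
```

With this layout, two or three failures can never cause an outage, because at least 10 are needed to break every feeder. For larger k, the sampled estimate comes with a guaranteed error band that narrows as the number of trials grows.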

As an example of ongoing efforts in this area, the Severe Storm Prediction, Education, and Evacuation from Disasters (SSPEED) Center, founded at Rice in 2007, has been exploring strategies for protecting the greater Houston region from future storm events.

SSPEED has endorsed the Galveston Bay Park Plan, which calls for a chain of man-made islands that would serve as a hurricane barrier and a 10,000-acre public park, and is currently working with the City of Houston, Harris County Flood Control, the Port of Houston and an investor on the design and feasibility of the project.

Some “Cities of the Future” concepts are even more ambitious and “futuristic.” Padgett describes some of them:

“Think of smart city concepts that would monitor urban systems and harness the data revolution for improvements in operating conditions — transportation, for instance, and automation, the Internet of Things.”

The CEE department recently hired Kai Gong as an assistant professor, and he will join the faculty in January.

“His research,” Padgett said, “aims to help decarbonize important material flows by focusing on materials science problems related to buildings and infrastructure. His work combines simulations and advanced materials characterization with data-driven modeling.”

H. Russell Pitman ’58 has established the Paula and Reginald DesRoches Endowed Fellowship for Rice graduate students pursuing a degree in civil and environmental engineering. It honors Reginald DesRoches, a civil engineer and former dean of engineering who is now Rice’s new president, and his wife, Paula. Pitman, who earned a degree in business administration from Rice and worked as a certified public accountant, is a longtime benefactor of the university. “I did this because I like Reggie,” Pitman said. “It’s just one of the kooky things I do.”

Making clean-burning fuel while decarbonizing the environment

In his recent Chevron Lecture on Energy, Matteo Pasquali described how carbon nanotube (CNT) synthesis from hydrocarbons could be used to decarbonize, or, in his coinage, “de-COx-ify,” material manufacturing while producing clean-burning hydrogen.

After all, it’s carbon dioxide rather than carbon itself that leads to global warming. Along with fossil fuel use for transportation, materials manufacturing (“making stuff”) is among the leading sources of CO2 emissions. Carbon dioxide capture and storage is one way to reduce such emissions, but it’s expensive and generates a huge waste stream that must be managed and stored.

“The science is settled and the opportunity is clear. We already know how to make materials without carbon-dioxide emissions: split hydrocarbons and make clean hydrogen and useful carbon materials. Now it’s time to get started,” said Pasquali, the A.J. Hartsook Professor of Chemical and Biomolecular Engineering, and director of Carbon Hub.

“That carbon can be part of the solution is already credible,” he said. “Twenty years ago we could only make carbon black, which has limited use and small markets. Now we can make a range of carbon materials [that could replace] the major CO2 offenders: metals and construction materials. We just have to figure out a reliable way to scale up the processes and make them less expensive.”

Carbon Hub was launched in 2019 with a $10 million commitment from Shell and additional commitments from Prysmian, Mitsubishi Corporation Americas, Chevron and Huntsman to change how the world makes materials and uses hydrocarbons.

Rather than burning hydrocarbons, which releases carbon dioxide, and mining ores whose processing emits still more, Carbon Hub pursues a zero-emissions alternative: splitting hydrocarbons into clean-burning hydrogen fuel and solid carbon materials.

“The concept is simple,” Pasquali said. “We can make solid and usable carbon materials from hydrocarbons, so no emissions are ever generated.”
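A back-of-the-envelope mass balance shows what splitting a hydrocarbon yields. Taking methane as the simplest example (an assumption; the article does not name a specific feedstock or process), splitting gives solid carbon and hydrogen, while burning the same methane gives carbon dioxide:

$$
\mathrm{CH_4} \longrightarrow \mathrm{C_{(s)}} + 2\,\mathrm{H_2},
\qquad
\frac{m_{\mathrm{C}}}{m_{\mathrm{CH_4}}} = \frac{12.01}{16.04} \approx 0.75,
\qquad
\frac{m_{\mathrm{H_2}}}{m_{\mathrm{CH_4}}} = \frac{2 \times 2.016}{16.04} \approx 0.25
$$

$$
\mathrm{CH_4} + 2\,\mathrm{O_2} \longrightarrow \mathrm{CO_2} + 2\,\mathrm{H_2O},
\qquad
\frac{m_{\mathrm{CO_2}}}{m_{\mathrm{CH_4}}} = \frac{44.01}{16.04} \approx 2.74
$$

Roughly three-quarters of the methane’s mass ends up as solid carbon and one-quarter as hydrogen, compared with about 2.7 kilograms of CO2 for every kilogram burned. The balance ignores the energy needed to drive the splitting reaction, which is endothermic.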

At the moment, the global market for conventional carbon products is small. Carbon in the form of nanotubes, however, could potentially replace structural materials such as steel, aluminum and carbon fibers.

“It’s no longer a dream. This is something many of us have been doing for 20 years in the nanotech field,” Pasquali said. “We already know how to make the materials and achieve properties. We don’t know yet how to do it efficiently. Our goal is to make this transition happen fast.”

The Saudi Arabian Oil Co. (Aramco) has joined Carbon Hub with a $10 million, five-year sponsorship commitment dedicated to accelerating the energy transition by developing sustainable uses of hydrocarbons. Together with its partner SABIC (Saudi Basic Industries Corporation), Aramco will work with other Carbon Hub members to develop carbon materials that could displace emissions-intensive materials across broad industry sectors. “With the strong participation and representation of Aramco and SABIC,” Pasquali said, “we will span multiple steps in the carbon value chain, from feedstock production to material manufacturing.”

Deep neural networks promise the ‘democratization of AI’

By developing artificial intelligence (AI) software that runs on commodity processors and trains deep neural networks 15 times faster than platforms based on graphics processors, Anshumali Shrivastava has brought the future of computing closer to reality.

“The cost of training is the bottleneck in AI. Companies are spending millions of dollars a week just to train and fine-tune their AI workloads,” said Shrivastava, assistant professor of computer science (CS).

Deep neural networks (DNNs) are a powerful form of AI that can outperform humans at certain tasks. DNN training is typically a series of matrix multiplication operations, an ideal workload for graphics processing units (GPUs), which cost about three times more than general-purpose central processing units (CPUs).

“The industry is fixated on one kind of improvement — faster matrix multiplications,” Shrivastava said. “Everyone is looking at specialized hardware and architectures to push matrix multiplication. People are now even talking about having specialized hardware-software stacks for specific kinds of deep learning. Instead of taking an expensive algorithm and throwing the whole world of system optimization at it, I’m saying, ‘Let’s revisit the algorithm.’”

Shrivastava’s startup company, Houston-based ThirdAI, pronounced “Third Eye,” is dedicated to building the next generation of scalable and sustainable AI tools and rewriting deep learning systems from scratch. ThirdAI’s accelerator builds hash-based processing algorithms for training and inference with neural networks. The technology is the result of 10 years of innovation in finding efficient mathematics for deep learning.

Shrivastava’s lab has recast DNN training as a search problem that could be solved with hash tables. Their “sub-linear deep learning engine” (SLIDE) is designed to run on commodity CPUs. Shrivastava and his collaborators at Intel have shown it could outperform GPU-based training.
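The core idea can be sketched in a few lines of Python with NumPy. This is a simplified illustration, not SLIDE’s or ThirdAI’s code: it assumes SimHash (signed random projections) as the hash family, and the class and function names are hypothetical. Each neuron’s weight vector is hashed into several tables. For a given input, only neurons that land in the same buckets are treated as likely to fire strongly, so the layer computes a small fraction of its activations instead of a full matrix multiplication.

```python
from collections import defaultdict

import numpy as np


class SimHashTables:
    """Hypothetical helper: n_tables hash tables, each keyed by k_bits signed random projections."""

    def __init__(self, dim, k_bits=6, n_tables=8, seed=0):
        rng = np.random.default_rng(seed)
        # One set of random hyperplanes per table: shape (n_tables, k_bits, dim).
        self.planes = rng.standard_normal((n_tables, k_bits, dim))
        self.tables = [defaultdict(list) for _ in range(n_tables)]
        self.powers = 2 ** np.arange(k_bits)

    def _codes(self, v):
        # Which side of each hyperplane v falls on, packed into one bucket id per table.
        bits = (self.planes @ v) > 0            # shape (n_tables, k_bits)
        return bits.astype(int) @ self.powers   # shape (n_tables,)

    def insert(self, idx, v):
        for table, code in zip(self.tables, self._codes(v)):
            table[int(code)].append(idx)

    def query(self, v):
        found = set()
        for table, code in zip(self.tables, self._codes(v)):
            found.update(table.get(int(code), []))
        return found


def sparse_forward(x, W, b, lsh):
    """Compute ReLU activations only for neurons retrieved from the hash tables."""
    active = np.array(sorted(lsh.query(x)), dtype=int)
    out = np.zeros(W.shape[0])
    if active.size:
        out[active] = np.maximum(W[active] @ x + b[active], 0.0)
    return out, active


rng = np.random.default_rng(1)
dim, n_neurons = 64, 1000
W = rng.standard_normal((n_neurons, dim))
b = np.zeros(n_neurons)
lsh = SimHashTables(dim)
for i, w in enumerate(W):
    lsh.insert(i, w)

x = W[42] + 0.1 * rng.standard_normal(dim)  # an input closely aligned with neuron 42
out, active = sparse_forward(x, W, b, lsh)
print(f"computed {active.size} of {n_neurons} neurons; neuron 42 selected: {42 in active}")
```

A training loop would also need to refresh the tables as the weights change, which the sketch leaves out. The parameters `k_bits` and `n_tables` trade off how many neurons are retrieved against how often a strongly matching neuron is missed.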

The SLIDE algorithm has demonstrated that ThirdAI can make commodity x86 CPUs train large neural networks up to 15 times faster than the most potent NVIDIA GPUs.

“Hash table-based acceleration already outperforms GPU, but CPUs are also evolving,” said Shabnam Daghaghi, a collaborator with Shrivastava who earned a Ph.D. this year from Rice in electrical engineering and computer science. “We leveraged those innovations to take SLIDE even further, showing that if you aren’t fixated on matrix multiplications, you can leverage the power in modern CPUs and train AI models four to 15 times faster than the best specialized hardware alternative.”

“Democratization of AI,” Shrivastava added, “will happen when we can train AI on commodity hardware.”

Longtime Rice benefactors Ann ’73, ’74 and John Doerr ’73, ’74 have provided a matching gift to fuel growth in high-demand areas of computer science. “Our goal is to encourage others to give,” Ann Doerr said. Among those who have answered the call is James Truchard, who co-founded Austin-based National Instruments in 1976. Over the decades he has hired hundreds of Rice graduates, and his daughter, Aimee Truchard, earned a B.A. in English from Rice in 1996. Truchard’s gifts, made personally and through the Truchard Foundation, total almost $6.5 million. Also taking advantage of the Doerrs’ generosity is Vinay Pai, who earned his B.A. in computer science in 1988 and his B.S. and M.S. in electrical engineering in 1988 and 1991, respectively. He and his wife, Aarti, have endowed the Vinay and Aarti Chair in Computer Science for a new faculty member who works in machine learning and AI.

Using machine learning to customize materials

The microscopic structures and the properties of materials are inherently linked, and determining those relationships in order to engineer materials for useful applications has always been difficult.

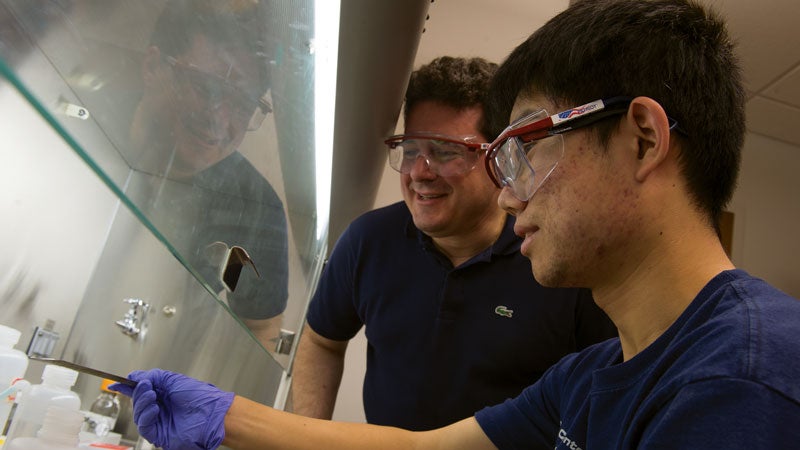

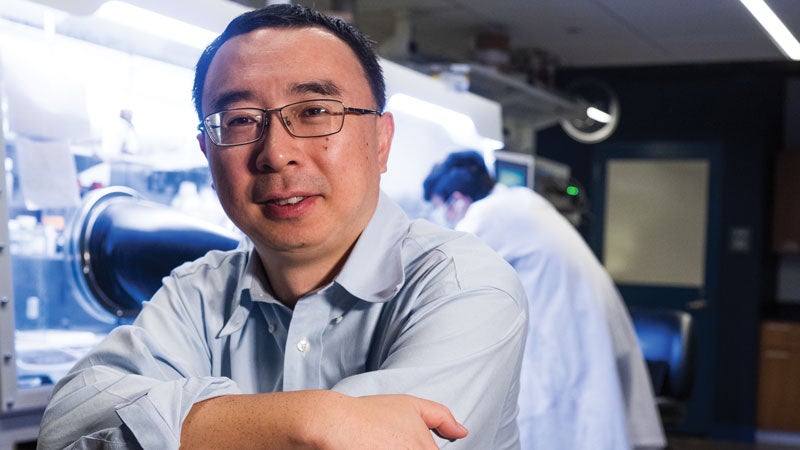

“My lab tries to simplify that process with the help of machine learning,” said Ming Tang, associate professor of materials science and nanoengineering.

To that end, Tang’s research group, in collaboration with physicist Fei Zhou of Lawrence Livermore National Laboratory, has developed a technique for predicting how microstructures (structural features measuring between 10 nanometers and 100 microns) evolve in materials.

Tang and Zhou have demonstrated how neural networks (computer models that mimic the brain’s neurons) can be trained to predict how microstructures will grow in various environments. A familiar example of such growth is the way a snowflake forms from moisture.

“In modern material science, it’s widely accepted that microstructure plays a critical role in controlling a material’s properties,” he said. “You not only want to control how the atoms are arranged on lattices, but also what the microstructure looks like, to give you reliable performance and new functionality.

“The holy grail of designing materials is predicting how a microstructure will change under given conditions, whether heated or stressed or some other stimulation.”

Tang has spent years refining microstructure prediction. Traditional equation-based simulations are slow and often failed to keep pace with the demand for new materials.

“The tremendous progress in deep learning encouraged Fei at Lawrence Livermore and us to see if we could apply it to materials,” he said. “Deep-learning algorithms are good at seeing the correlations in a very complex way that the human mind cannot. We take advantage of that.”

In multiple tests, Tang’s group fed the networks between 1,000 and 2,000 sets of 20 successive images illustrating a material’s microstructure evolution as predicted by the equations. After training, the network was given from one to 10 images to predict the next 50 to 200 frames, and in most cases did so in seconds, hundreds of times faster than the equation-based approach.
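One common way for a network to turn a handful of seed images into hundreds of future frames is autoregressive rollout: predict one frame, append it to the history, and repeat. The Python sketch below shows only that loop. Whether Tang’s network works frame by frame is an assumption here, and `stand_in_model` is a hypothetical placeholder (one smoothing step of a simple diffusion equation) standing in for a trained network; the 10-frame context window simply mirrors the one to 10 seed images described above.

```python
import numpy as np


def stand_in_model(history: list[np.ndarray]) -> np.ndarray:
    """Hypothetical placeholder for a trained network: one explicit diffusion
    (smoothing) step on the latest frame, with periodic boundaries."""
    u = history[-1]
    laplacian = (np.roll(u, 1, axis=0) + np.roll(u, -1, axis=0)
                 + np.roll(u, 1, axis=1) + np.roll(u, -1, axis=1) - 4 * u)
    return u + 0.2 * laplacian  # coefficient <= 0.25 keeps this explicit step stable


def rollout(seed_frames, n_future, model, context=10):
    """Autoregressively predict n_future frames, feeding each prediction back in."""
    history = list(seed_frames)
    predicted = []
    for _ in range(n_future):
        nxt = model(history[-context:])  # only the most recent frames are used
        predicted.append(nxt)
        history.append(nxt)
    return np.stack(predicted)


rng = np.random.default_rng(0)
seed_frames = [rng.random((64, 64))]  # a single seed image
frames = rollout(seed_frames, n_future=200, model=stand_in_model)
print(frames.shape)  # (200, 64, 64)
```

Because each prediction becomes input for the next, errors can compound over long rollouts.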

“Based on the testing, we demonstrate the deep-learning model gives accurate predictions but is hundreds of times faster than the equation-based approach,” he said. “The most exciting thing is that the network can predict how the microstructure grows in situations it never ‘sees’ during the training process. This indicates the network already grasps the evolution rules and can completely replace the equation-based simulations.”

The computational efficiency of neural networks will accelerate development of novel materials. Tang expects these findings to aid in his lab’s ongoing design of more efficient batteries. “We’re thinking about novel three-dimensional electrode structures that will help charge and discharge batteries much faster than what we have now,” he said.

Sunit Patel graduated from Rice in 1985 with degrees in chemical and biomolecular engineering (ChBE) and economics and now serves as chief financial officer of Ibotta, a consumer technology company. He has endowed the Tina and Sunit Patel Professorship in Molecular Nanotechnology, now held by Mike Wong, chair of the ChBE department. While at Rice, Patel took classes with the late Robert Curl and often spoke with Richard Smalley. In 1996, Curl and Smalley shared the Nobel Prize in Chemistry with Harold Kroto for their discovery of buckyballs. “Thanks to them, nanotechnology holds much promise in the fields of energy transition, drug delivery and materials,” Patel said.

Nancy Packer Carlson ’80 and Clint Carlson ’79, ’82 have established their second endowed chair at Rice, this one in advanced materials in the School of Engineering. Nancy, who holds degrees in economics and sociology from Rice, served on the Board of Trustees for 12 years. Clint, a board member of the Rice Management Company, earned his B.A. and M.B.A. from Rice and founded Carlson Capital in 1993. Nancy and Clint are committed to advancing the university’s research priorities, in this case through the exploration and development of advanced materials.